Yueci Deng (邓岳慈)

Ph.D. Student, School of Data Science, CUHK-SZ

I am a first-year CS Ph.D. student at the Chinese University of Hong Kong, Shenzhen (CUHK-SZ), supervised by Prof. Kui Jia. I received my B.S. from UESTC (2014-2018) and my M.S. from NTU, Singapore (2018-2019). Before joining CUHK-SZ, I worked as an architect at DexForce Technology, where I led the development of DexVerse™, a Sim2Real AI platform for embodied intelligence.

My research interests are mainly in the following areas:

- Systems:
  - High-performance, Heterogeneous and GPU-accelerated Simulation Engine Architecture
  - Data Generation and Model Training Systems for Embodied Intelligence

- Simulation:
  - Generative Simulation
  - Differentiable Rendering and Physics
  - Neural Representation for Simulation
  - Differentiable Environments for Analytic Policy Gradients

- Embodied Intelligence:
  - Neural Motion Generation for Robot Control
  - Physics-Structured Model Architecture
  - Online and Continual Learning for Embodied Agents
  - Sim2Real Transfer and Domain Adaptation

Collaborations & Opportunities

I welcome research and open-source project collaborations on Embodied Intelligence and Simulation Infrastructure. If you’re interested in joint projects, code sharing, student internships, or industry partnerships, please contact me at yuecideng@link.cuhk.edu.cn.

Projects

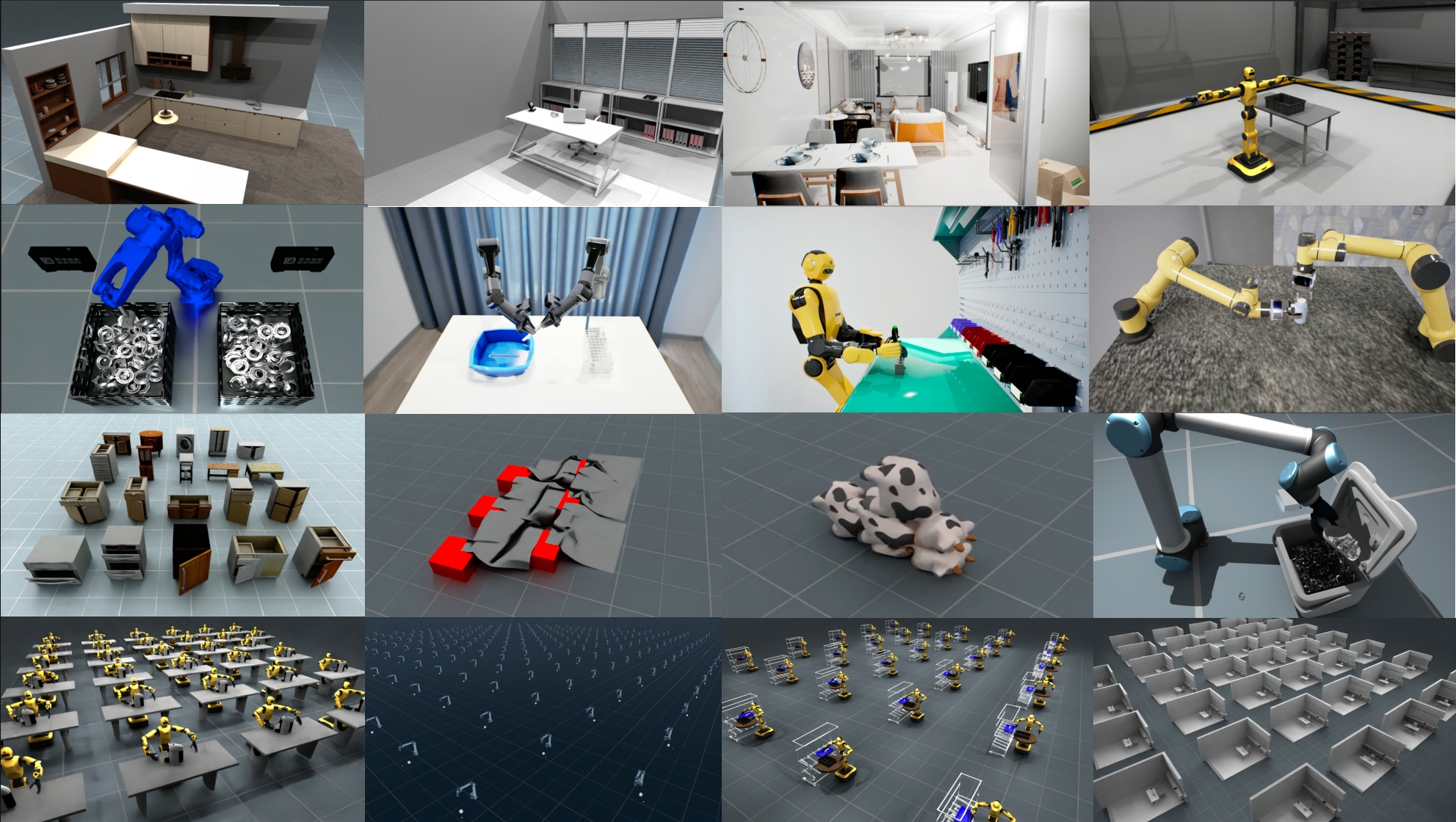

Description: EmbodiChain is a unified, GPU-accelerated framework for embodied AI research and development. It integrates high-performance simulation, data collection via automated generative simulation techniques, a data scaling pipeline, modular model architectures, and efficient training and evaluation tools. These components work together to support rapid experimentation, deployment of embodied intelligence, and Sim2Real transfer to real-world robotic systems.

Description: The leading open-source library for 3D processing with 400K+ monthly downloads from PyPI. Open3D exposes a set of carefully selected data structures and algorithms in both C++ and Python for 3D data processing tasks including point cloud processing, mesh processing, and 3D visualization.

Publications

Description: A lightweight real-world RL method that uses residual RL and affordance-guided rewards to speed up contact-rich robot manipulation learning with minimal human-in-the-loop effort.

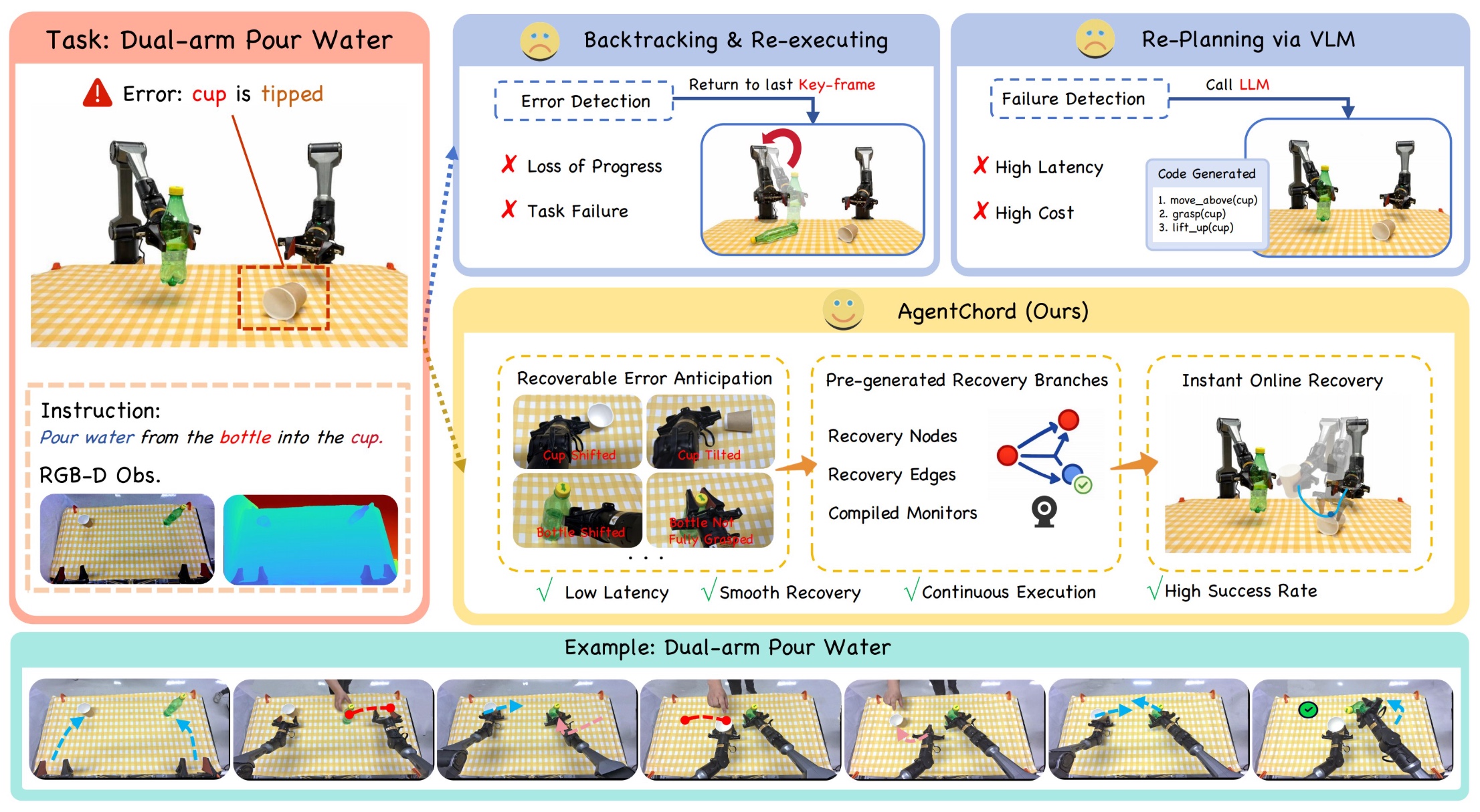

Description: A recovery-aware task-graph system that helps real robots anticipate disturbances, switch to prepared recovery branches, and keep long-horizon manipulation moving.

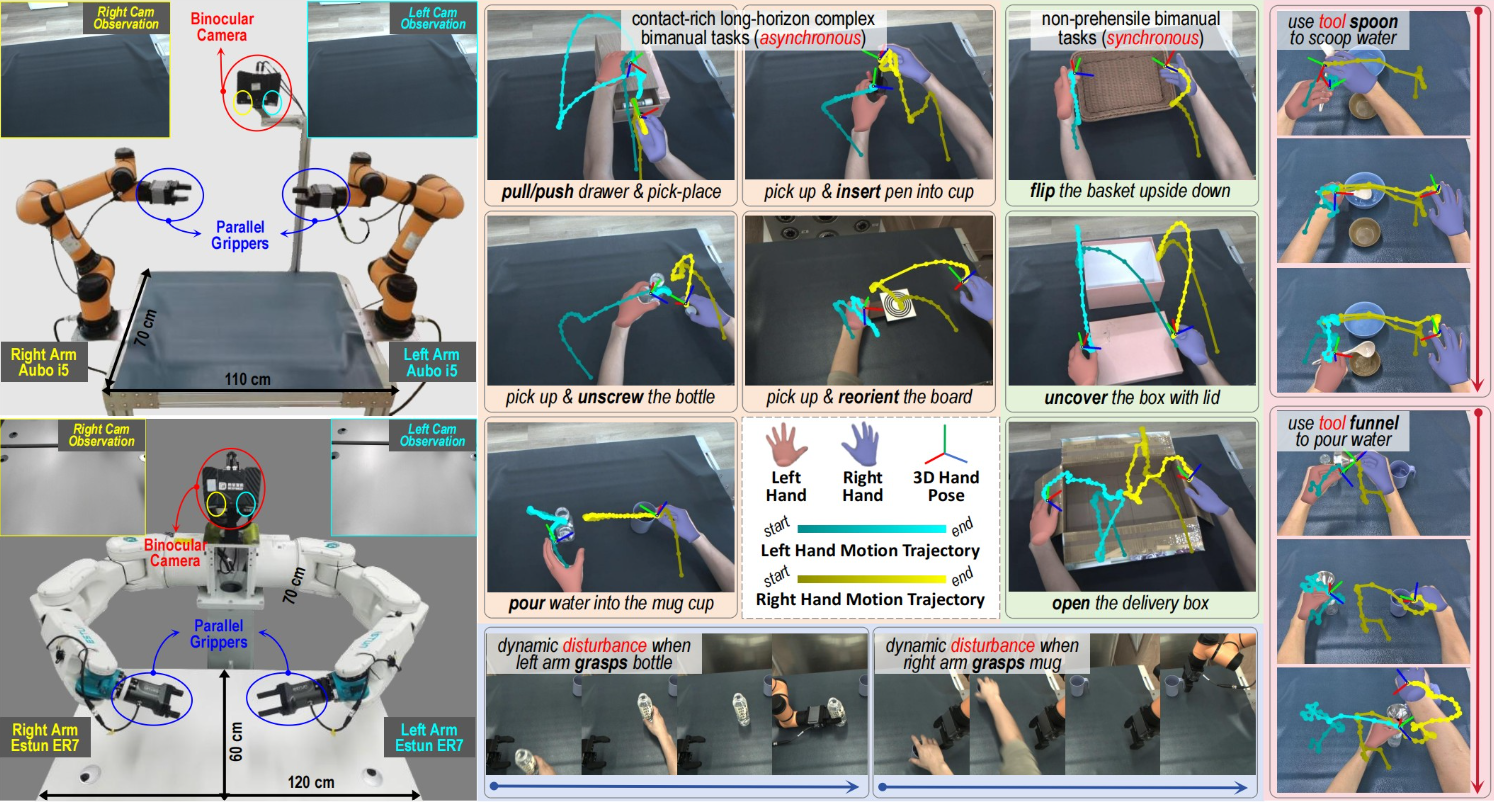

Description: YOTO++ (extended from the conference version, You Only Teach Once) enables cross-embodiment deployment, from contralateral to humanoid dual-arm setups. It supports diverse bimanual tasks, including asynchronous, synchronous, and tool-using scenarios, with closed-loop control under dynamic disturbances during pre-grasping.

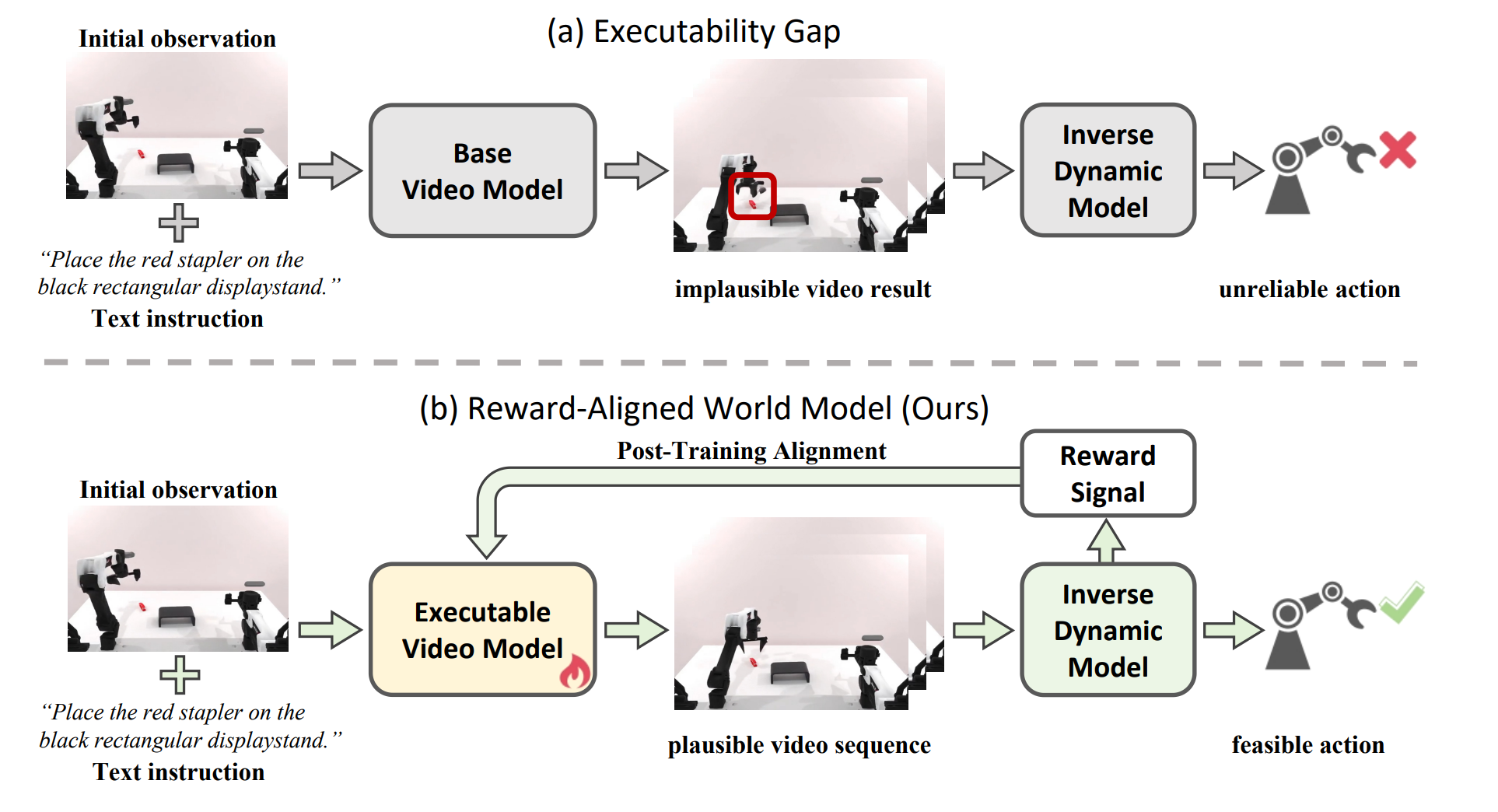

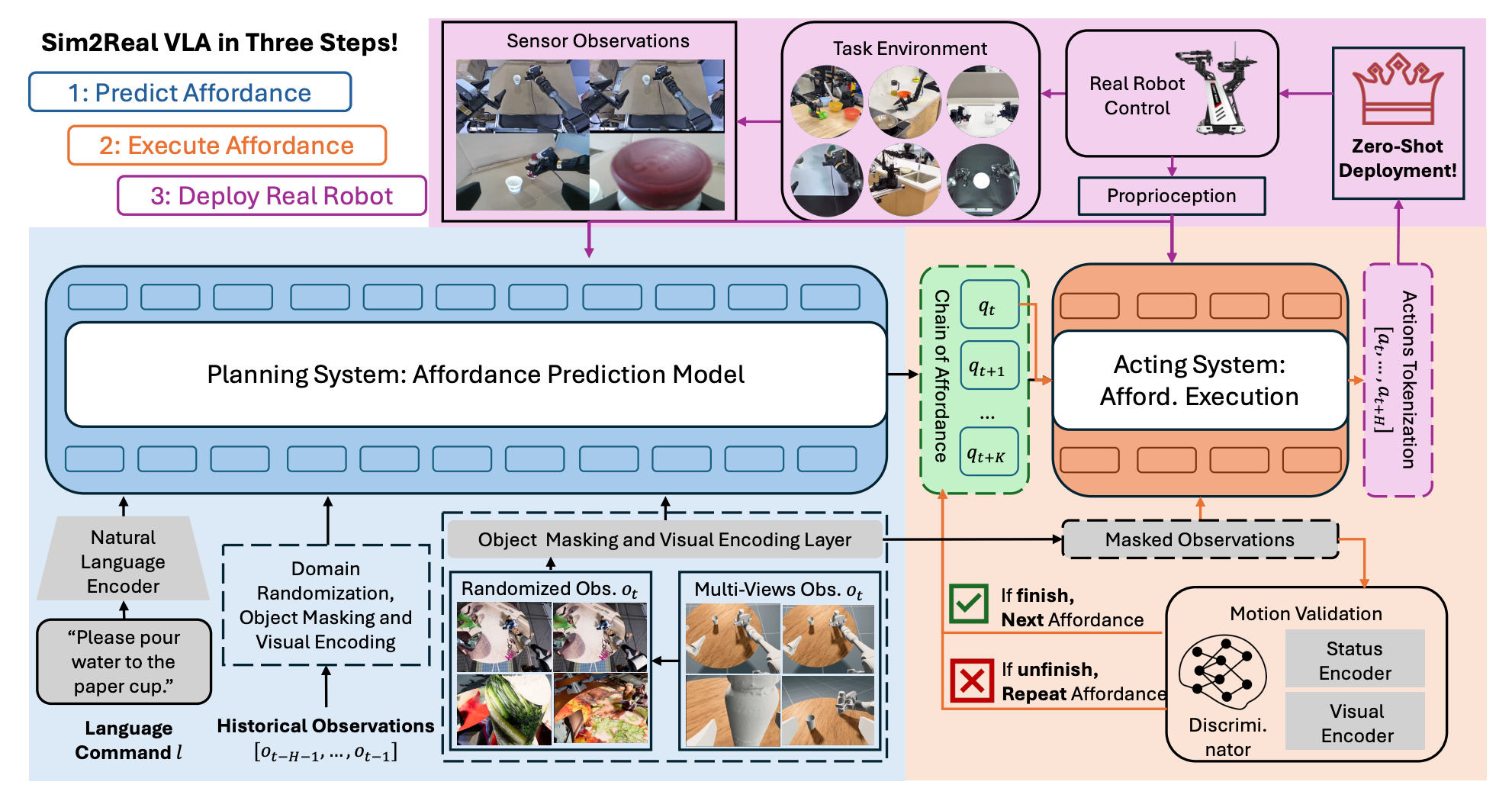

Description: This paper introduces Sim2Real-VLA, a generalist robotic control model that enables zero-shot transfer from synthetic simulation to real-world manipulation tasks.

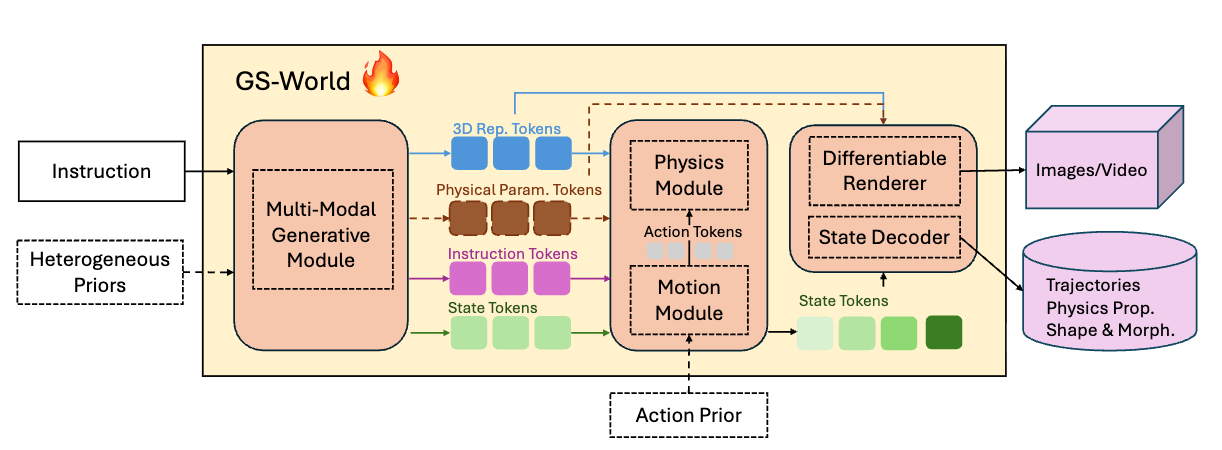

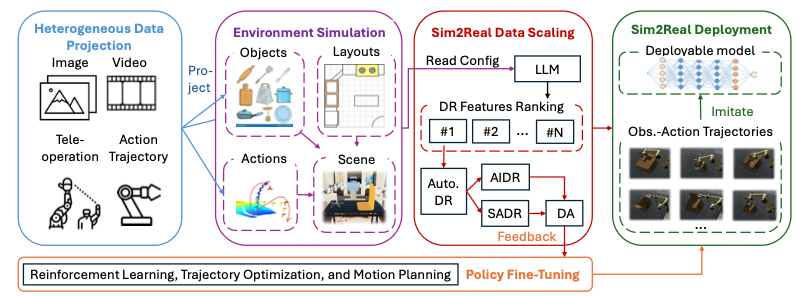

Description: A novel data engine for automating data generation and scaling for sim-to-real transfer of robotic manipulation tasks.

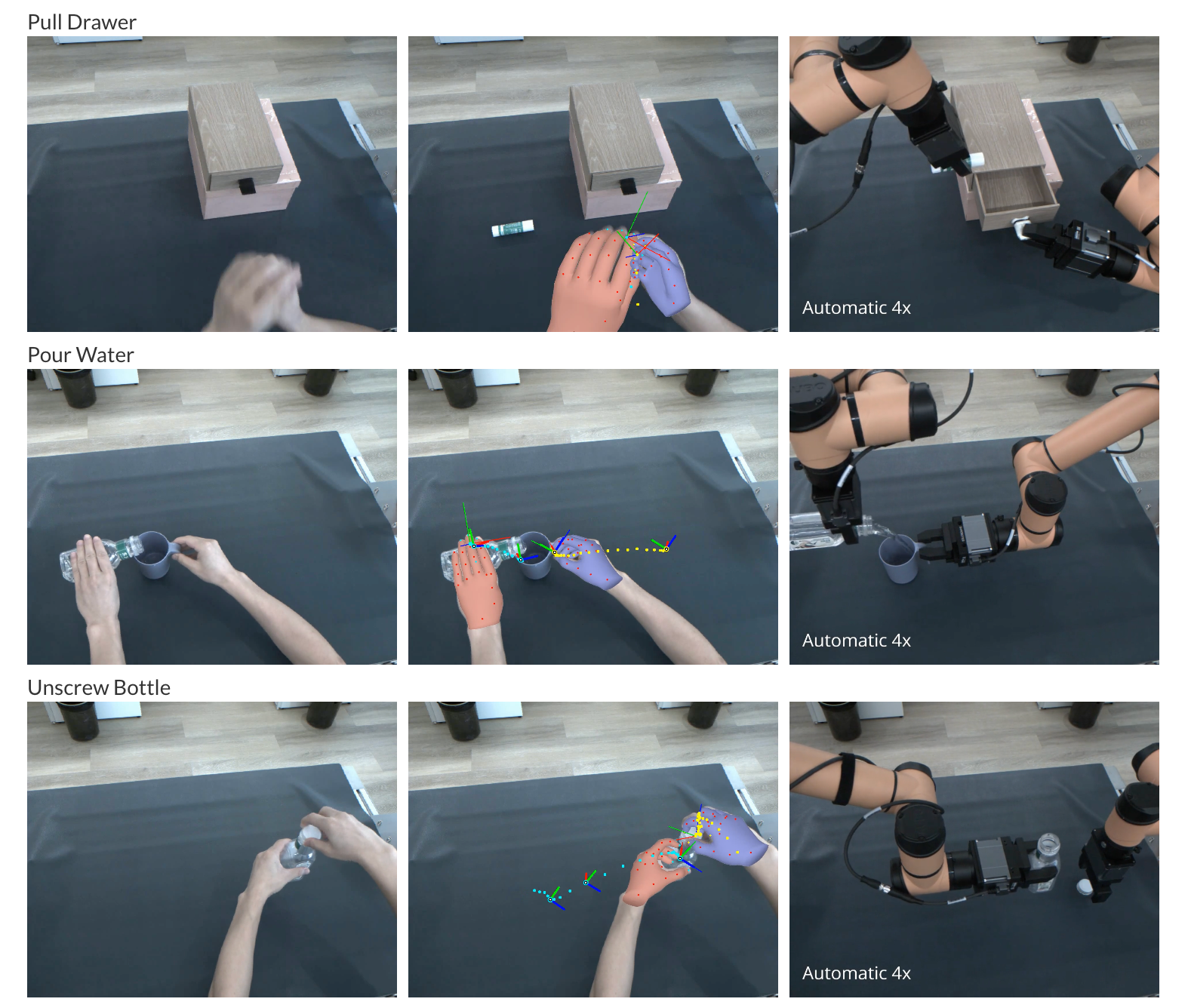

Description: This work proposes YOTO (You Only Teach Once), which extracts and then injects patterns of bimanual actions from as few as a single binocular observation of hand movements, and teaches dual robot arms various complex tasks.